AI Product Delivery

Mar 22, 2026

AI Product Kickoff System for Governed, AI-Assisted Delivery

This is the reusable kickoff system I use to move from idea to governed, AI-assisted product delivery with less drift, clearer phase control, and stronger validation.

Who this is for

This is for builders who no longer want to start projects with:

“Build the app.”

It is for people who want a repeatable system for:

full product creation

phase control

AI-assisted implementation

governance across the lifecycle

validation before ship

That includes:

program managers

product leaders

technical founders

software architects

lead developers

implementation consultants

Core idea

Most AI-assisted projects break in one of three places:

Scope drift

The AI starts in one direction and finishes in another.

Governance failure

Nobody owns dependencies, review gates, escalation, or benefit tracking.

Validation failure

Teams confuse “generated” with “done.”

The fix is to start with a governed kickoff system, not a coding request.

That means every project begins with:

target state

non-goals

phase boundaries

implementation artifacts

validation rules

rollback expectations

branch and session discipline

release evidence

What I am accountable for

When I use this system, I am not acting like “the person telling AI what to do.”

I am acting as the accountable owner of:

outcomes

scope

governance

dependencies

validation

change control

release readiness

In practice, that means I own:

Outcome control

business goal

user outcome

project success criteria

Benefit control

what value the project should create

which phase unlocks which value

whether execution is still aligned

Stakeholder control

sponsor

approver

technical owner

review cadence

escalation path

Change control

what can change now

what must wait

what is drift

what triggers re-planning

Delivery control

phase gates

validation standards

evidence of completion

ship approval

The kickoff system

Every project starts the same way.

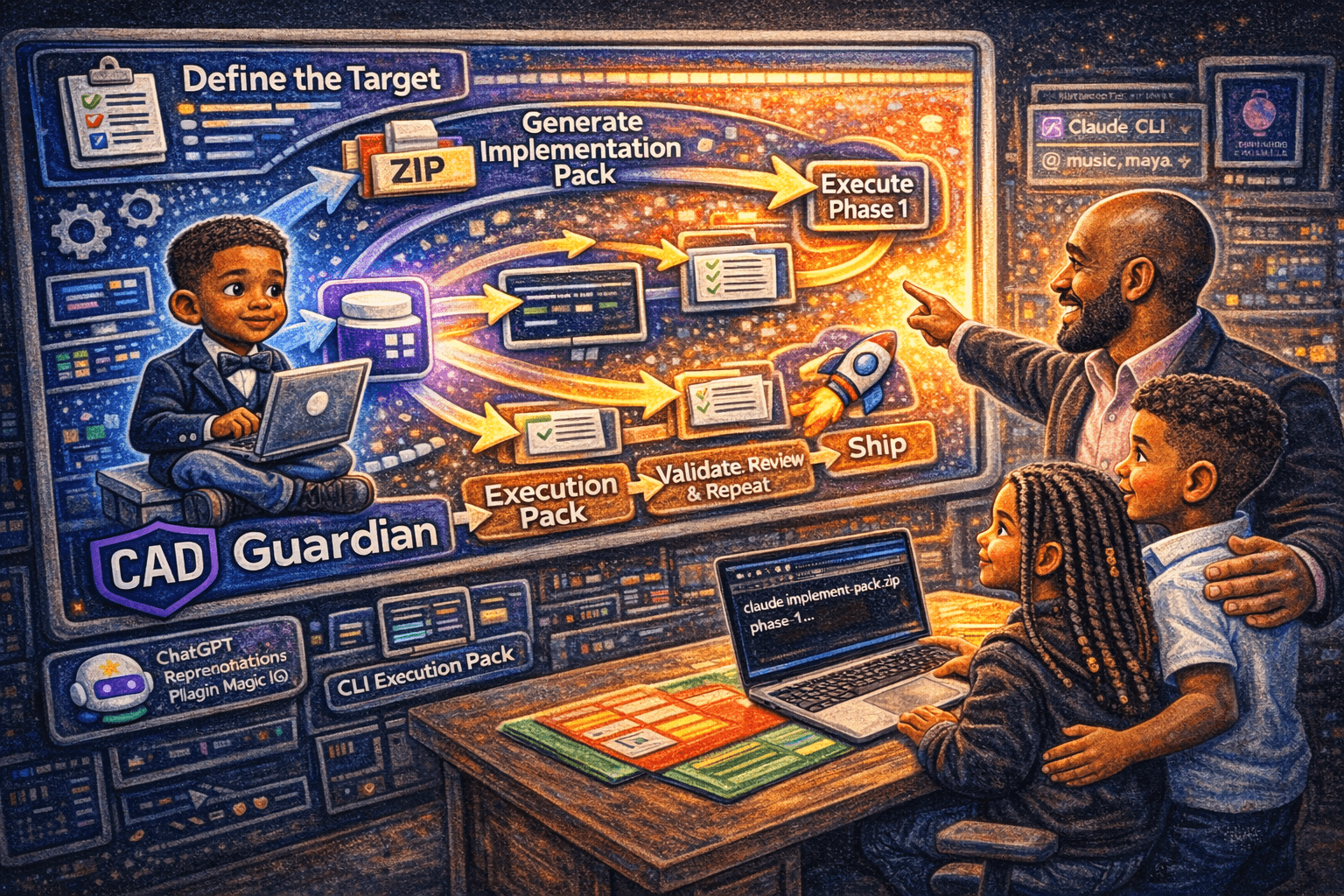

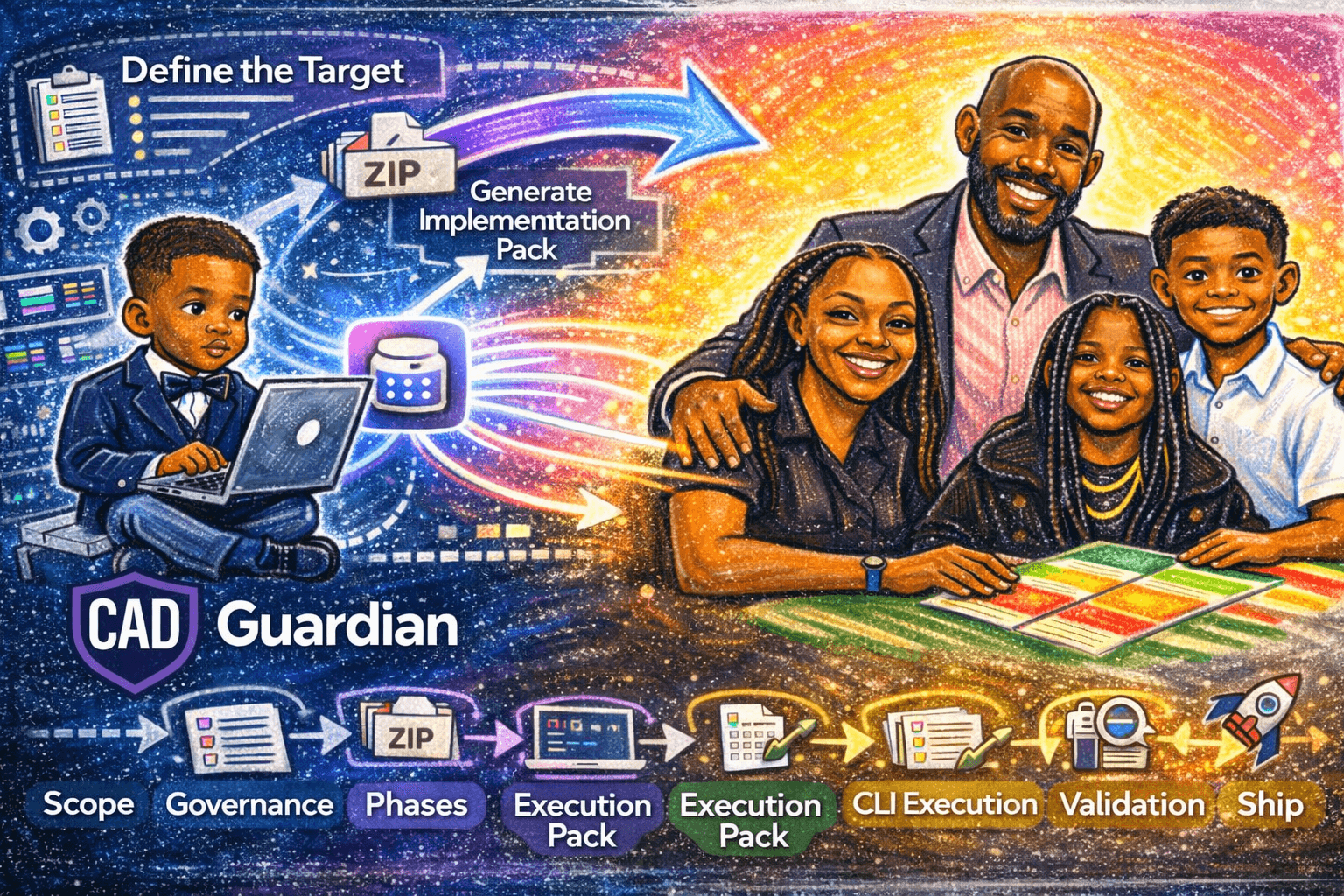

Step 1: Define the control surface

Before any build work starts, fill in:

Project name

Product thesis

Primary user

Primary business outcome

Success criteria

Non-goals

Constraints

Known dependencies

Primary risks

Decision-maker

Release target

Preferred AI surface

Required validation

This becomes the control layer for the entire lifecycle.
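One way to keep the control surface honest is to treat it as a record that must be complete before build work starts. The sketch below is only an illustration: the `ControlSurface` class, its field names, and the `missing_fields` helper are hypothetical names derived from the list above, not part of any tool.

```python
from dataclasses import dataclass, fields


@dataclass
class ControlSurface:
    """Hypothetical record with one field per kickoff item in Step 1."""
    project_name: str = ""
    product_thesis: str = ""
    primary_user: str = ""
    primary_business_outcome: str = ""
    success_criteria: str = ""
    non_goals: str = ""
    constraints: str = ""
    known_dependencies: str = ""
    primary_risks: str = ""
    decision_maker: str = ""
    release_target: str = ""
    preferred_ai_surface: str = ""
    required_validation: str = ""


def missing_fields(surface: ControlSurface) -> list[str]:
    """Return the names of fields that are still blank."""
    return [
        f.name
        for f in fields(surface)
        if not getattr(surface, f.name).strip()
    ]


if __name__ == "__main__":
    draft = ControlSurface(project_name="Demo project")
    # Anything printed here still needs an answer before Step 2.
    print(missing_fields(draft))
```

An empty result from `missing_fields` would be the signal that Step 1 is finished and the implementation pack can be generated.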

Step 2: Generate the implementation pack

The AI should create a zip or folder with:

00_MASTER_PLAN.md

01_PHASE_1_[NAME].md

02_PHASE_2_[NAME].md

03_PHASE_3_[NAME].md

04_PHASE_4_[NAME].md

05_PHASE_5_[NAME].md

06_PHASE_6_[NAME].md

07_EXECUTION_MANIFEST.[json|yaml]

08_[AI_TOOL]_PROMPTS.md

09_SHIP_CHECKLIST.md
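The naming pattern for the pack can be expressed as a small helper, which makes it easy to check that a generated pack is complete. This is a sketch under assumptions: `pack_filenames` is a hypothetical function, and the phase names and tool name in the usage example are made up for illustration.

```python
def pack_filenames(phase_names, ai_tool, manifest_ext="json"):
    """Build the expected file names for a six-phase implementation pack."""
    if len(phase_names) != 6:
        raise ValueError("the pack standard expects exactly six phases")
    if manifest_ext not in ("json", "yaml"):
        raise ValueError("manifest_ext must be 'json' or 'yaml'")

    def slug(text):
        # Upper-case and underscore-separated, matching the [NAME] placeholders.
        return text.strip().upper().replace(" ", "_")

    names = ["00_MASTER_PLAN.md"]
    for number, phase in enumerate(phase_names, start=1):
        names.append(f"{number:02d}_PHASE_{number}_{slug(phase)}.md")
    names.append(f"07_EXECUTION_MANIFEST.{manifest_ext}")
    names.append(f"08_{slug(ai_tool)}_PROMPTS.md")
    names.append("09_SHIP_CHECKLIST.md")
    return names


if __name__ == "__main__":
    # Hypothetical phase and tool names, for illustration only.
    phases = ["foundation", "data model", "core flows", "ui", "hardening", "release"]
    for name in pack_filenames(phases, "assistant"):
        print(name)
```

Comparing this list against the files actually present in the repo folder is a cheap first check before any phase begins.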

Step 3: Load the pack into the repo

Put the pack inside the repo so the AI can use current codebase context and phase instructions together.

Step 4: Execute one phase at a time

Each phase gets:

one branch

one AI session

one objective

one validation loop

one review gate

Step 5: Validate and close

No phase advances without a closing record of:

files changed

commands run

validation results

unresolved issues

rollback notes

recommended commit message
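The closing record can be enforced as a simple gate. The sketch below assumes the evidence is collected in a dictionary; the key names and the `can_advance` helper are hypothetical. An explicitly empty value, such as an empty list of unresolved issues, counts as recorded, while a missing key does not.

```python
REQUIRED_EVIDENCE = (
    "files_changed",
    "commands_run",
    "validation_results",
    "unresolved_issues",
    "rollback_notes",
    "recommended_commit_message",
)


def can_advance(evidence: dict) -> tuple[bool, list[str]]:
    """Return (ok, missing) where missing lists evidence items not recorded."""
    missing = [key for key in REQUIRED_EVIDENCE if evidence.get(key) is None]
    return (not missing, missing)


if __name__ == "__main__":
    ok, missing = can_advance({"files_changed": ["app/models.py"]})
    print(ok, missing)
```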

Implementation pack standard

00_MASTER_PLAN.md

Must include:

project objective

target user

business reason

success criteria

non-goals

architecture boundaries

phase order

definition of done

ship criteria

01–06_PHASE_*.md

Each phase file must include:

exact objective

what changes in this phase

what must not change

files/folders likely affected

validation commands

acceptance criteria

risk notes

rollback notes

dependency notes

07_EXECUTION_MANIFEST.[json|yaml]

Should include:

phase order

branch naming convention

per-phase tasks

command blocks

validation blocks

expected outputs
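As an illustration of what the manifest might contain, the sketch below builds one as a plain dictionary and serializes it to JSON. The `build_manifest` function, the key names, and the `phase-{number}-{slug}` branch pattern are assumptions for this example; the standard above only says which kinds of information the manifest should hold.

```python
import json


def build_manifest(phases, branch_pattern="phase-{number}-{slug}"):
    """Assemble a manifest dictionary from a list of phase descriptions."""
    entries = []
    for number, phase in enumerate(phases, start=1):
        slug = phase["name"].lower().replace(" ", "-")
        entries.append({
            "phase": number,
            "name": phase["name"],
            "branch": branch_pattern.format(number=number, slug=slug),
            "tasks": phase.get("tasks", []),
            "commands": phase.get("commands", []),
            "validation": phase.get("validation", []),
            "expected_outputs": phase.get("expected_outputs", []),
        })
    return {
        "phase_order": [entry["name"] for entry in entries],
        "branch_naming": branch_pattern,
        "phases": entries,
    }


if __name__ == "__main__":
    # Hypothetical phase content, for illustration only.
    manifest = build_manifest([
        {"name": "Data Model", "tasks": ["define entities"], "validation": ["run unit tests"]},
    ])
    print(json.dumps(manifest, indent=2))
```

Keeping the manifest machine-readable means the same file can drive branch creation and the validation loop for each phase.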

08_[AI_TOOL]_PROMPTS.md

Should include:

planning prompt

execution prompt

validation prompt

review prompt

ship-readiness prompt

09_SHIP_CHECKLIST.md

Should include:

environment verification

build

type check

tests

deployment

analytics

monitoring

rollback

stakeholder signoff

release notes

Terminal-first execution standard

I recommend terminal-first execution because it is easier to inspect, easier to diff, and easier to govern.

Required AI behavior

The AI must:

read the master plan

read the current phase file

summarize scope

stop before editing

wait for approval

execute only the current phase

run validation

summarize evidence

stop
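The required behavior reads as an ordered sequence with a hard approval gate in the middle. A minimal sketch of that idea follows; `PhaseSession` and its methods are hypothetical and do not correspond to any AI tool's API. The only rule it enforces is that the editing step cannot run until a human has approved.

```python
STEPS = [
    "read the master plan",
    "read the current phase file",
    "summarize scope",
    "stop before editing",
    "wait for approval",
    "execute only the current phase",
    "run validation",
    "summarize evidence",
    "stop",
]


class PhaseSession:
    """Walks through STEPS in order and blocks execution until approved."""

    def __init__(self):
        self.index = 0
        self.approved = False

    def approve(self):
        # Called by the human reviewer, never by the AI.
        self.approved = True

    def next_step(self):
        if self.index >= len(STEPS):
            raise RuntimeError("session is finished")
        step = STEPS[self.index]
        if step == "execute only the current phase" and not self.approved:
            raise PermissionError("human approval is required before editing")
        self.index += 1
        return step


if __name__ == "__main__":
    session = PhaseSession()
    for _ in range(5):
        print(session.next_step())
    session.approve()
    print(session.next_step())
```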

Prohibited AI behavior

The AI must not:

start later phases

opportunistically rewrite unrelated files

silently redefine the product

skip validation

push changes automatically

read secrets without permission

bypass approval gates

Required human review

Before commit, review:

git status

git diff --stat

git diff

validation output

unresolved issues
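The git portion of this review can be gathered into one report so nothing is skipped. The sketch below uses Python's standard `subprocess.run`; the `collect_review` helper and the report shape are assumptions for illustration. Validation output and unresolved issues would still be reviewed separately.

```python
import subprocess

# The three git commands from the review list above.
REVIEW_COMMANDS = [
    ["git", "status"],
    ["git", "diff", "--stat"],
    ["git", "diff"],
]


def collect_review(run=subprocess.run):
    """Run each review command and return its output keyed by command text.

    The runner is injectable so the function can be exercised without a repo.
    """
    report = {}
    for command in REVIEW_COMMANDS:
        result = run(command, capture_output=True, text=True, check=False)
        report[" ".join(command)] = result.stdout
    return report


if __name__ == "__main__":
    # Run from inside the repository being reviewed.
    for command, output in collect_review().items():
        print(f"$ {command}")
        print(output)
```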

Reusable kickoff template

Copy this into a fresh document for every new project.

What makes this stronger than normal prompting

Most AI workflows start too late.

They start with:

“Build X.”

This system starts higher:

“What is the governed path from target state to validated delivery?”

That improves:

clarity

reuse

portfolio consistency

stakeholder trust

implementation quality

auditability

Add-ons that make this stronger

If you want an even better system, add these files:

10_BENEFITS_REGISTER.md

11_DECISION_LOG.md

12_STAKEHOLDER_MAP.md

13_DEPENDENCY_MAP.md

14_PHASE_EVIDENCE/

15_RELEASE_SCORECARD.md

16_POST_IMPLEMENTATION_REVIEW.md

These improve:

benefit tracking

decision traceability

stakeholder management

dependency control

evidence quality

release confidence

continuous improvement

Short internal version

I do not start by asking AI to build the product.

I start by forcing the product into a governed execution system.

The AI then implements one approved phase at a time, with explicit validation, evidence, and human review before commit.

Author box

Thomas Divine Smith II

Also known as tsmithcode and tsmithcad. I build AI-assisted software systems, product delivery frameworks, and architecture-driven execution methods that help teams move from idea to governed, reusable delivery.

Final AI instruction block

FAQ section

Is this for project managers or program managers?

This is closer to a program-management operating model because it governs benefits, stakeholders, dependencies, phase gates, and delivery evidence across the lifecycle. PMI explicitly frames program management around benefits, stakeholders, and governance rather than single-task execution.

Can I use this with any AI tool?

Yes. That is why the model, tool, and execution surface are placeholders. The structure should survive vendor changes.

Why one phase per session?

Because long multi-phase AI sessions accumulate drift, assumptions, and context pollution. One phase per session makes review, rollback, and accountability cleaner.

Why terminal-first?

Because it is easier to inspect, easier to version, easier to validate, and easier to keep honest.

Can this guarantee better results?

It cannot guarantee outcomes. Used rigorously, it makes delivery more consistent, easier to review, and easier to keep aligned with the stated goals.